
I am guessing it has to do something with how the hypervisor is assembling the packets and sending them to our FlashArray. I am not sure I have ever witnessed MTU reporting such a drastic difference in performance, in fact (depending on load) I have seen MTU increase decrease performance. IOmeter Test (75% Read, 25% Write at 32k IO using a single thread): 800MB/s - 1.2GB/s IOmeter Test (75% Read, 25% Write at 32k IO using a single thread): 250MB/s - 350MB/s If we look below here are the results:įile Copy within Windows VM: 50MB/s - 120MB/s

The answer isn't quite what I expected MTU. Hello nickdd - I believe we are making some progress here. Tested the network throughput in the VM with iperf and getting the maximum network speed: 9.90 gbit/s.Īny ideas how to work this out and get decent performance ? The esxi's and storage server were only hosting the test machine, so no other workload was active.

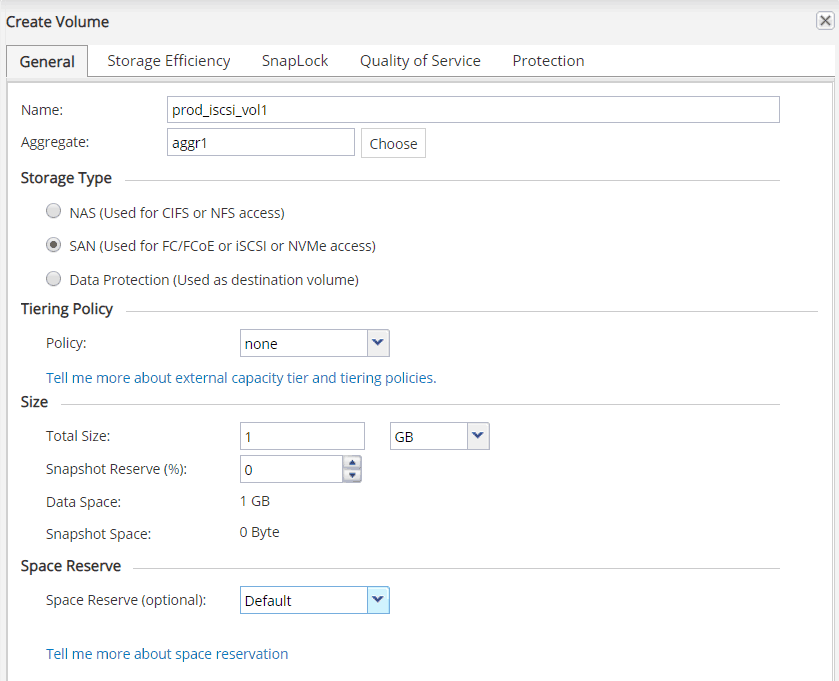

Set up the iscsi target as VMFS5 datastore and then added a virtual disk to my VM and test speed: 260 MB/Sįor C I expected somewhat the same performance as B, but already lose 325 MB/s there which seems to much to be only the virtualization overhead ? The same, default, iscsi settings were used.įor this is just too slow, only 1/3 of the speed I'm getting compared to test B.

I've done some testing to establish a non-vmware baseline:Ī: writing to local storage (raid array): 830 MB/sī: mounted the iscsi target on other physical linux machine: 825 MB/sĬ: mounted the iscsi target in a VM with vmxnet3 NIC: 500 MB/s Ubuntu 14.04 on the storage server (physical machine) as the iscsi target Megaraid 9266-8i raid controller with 8 x 4 TB sas disks in RAID10. Dell N4032 10 gbit switches (all jumbo frames etc) I'm unhappy with the throughput of my iscsi datastore in my vsphere environment.
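The ratios quoted in the question (losing 325 MB/s in test C, the VMFS5 datastore reaching only about 1/3 of test B) can be checked with a short script. This is a minimal sketch, assuming decimal units (1 MB = 10^6 bytes, 1 Gbit = 10^9 bits), since the post does not say which units iperf or the disk tests reported. It also converts the iperf figure to MB/s, which suggests the 10 Gbit link (roughly 1237 MB/s) is not the ceiling in any of the tests; test B is already close to the local-disk result of test A.

```python
# Back-of-envelope check of the throughput figures reported in the post.
# Assumption: decimal units (1 MB = 10**6 bytes, 1 Gbit = 10**9 bits).

IPERF_GBIT_PER_S = 9.90  # iperf result measured inside the VM

results_mb_per_s = {
    "A: local RAID10 array": 830,
    "B: iSCSI on physical Linux host": 825,
    "C: iSCSI in VM (vmxnet3)": 500,
    "VMFS5 datastore + virtual disk": 260,
}


def gbit_to_mb(gbit_per_s: float) -> float:
    """Convert Gbit/s to MB/s using decimal units (1 byte = 8 bits)."""
    return gbit_per_s * 1000 / 8


def summarize(
    results: dict[str, float], reference_key: str, link_mb: float
) -> dict[str, tuple[float, float]]:
    """Return (fraction of the reference test, fraction of link capacity) per test."""
    ref = results[reference_key]
    return {name: (mb / ref, mb / link_mb) for name, mb in results.items()}


link_mb = gbit_to_mb(IPERF_GBIT_PER_S)
print(f"iperf link capacity: {link_mb:.1f} MB/s")
summary = summarize(results_mb_per_s, "B: iSCSI on physical Linux host", link_mb)
for name, (vs_ref, vs_link) in summary.items():
    # Compare every test against the physical-host iSCSI baseline (test B)
    print(f"{name:35s} {vs_ref:6.1%} of B, {vs_link:6.1%} of link")
```

Using test B as the reference isolates what the virtualization layers cost, because B already exercises the same target, switches and iSCSI settings without ESXi in the path.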
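One way to reason about why MTU can matter so much for the 32k IOmeter workload is to count packets per IO. The sketch below is illustrative only. It assumes an MTU of 1500 (standard Ethernet) versus 9000 (a common jumbo-frame size), because the reply does not state which MTU values were compared. It also assumes IPv4 and TCP headers without options, and it ignores iSCSI PDU headers.

```python
import math

# Assumed header sizes: IPv4 and TCP without options. Real stacks may add
# TCP options (e.g. timestamps) and iSCSI adds its own PDU headers; both
# are ignored in this sketch.
IPV4_HEADER = 20
TCP_HEADER = 20
# Per-frame bytes on the wire outside the MTU: Ethernet header (14),
# FCS (4), preamble + start-of-frame delimiter (8), inter-frame gap (12).
ETHERNET_OVERHEAD = 14 + 4 + 8 + 12


def segments_per_io(io_bytes: int, mtu: int) -> int:
    """Number of full-size TCP segments needed to carry one I/O of io_bytes."""
    mss = mtu - IPV4_HEADER - TCP_HEADER
    return math.ceil(io_bytes / mss)


def wire_efficiency(mtu: int) -> float:
    """Fraction of wire bandwidth that carries TCP payload in a full frame."""
    mss = mtu - IPV4_HEADER - TCP_HEADER
    return mss / (mtu + ETHERNET_OVERHEAD)


IO_SIZE = 32 * 1024  # the 32k IO size used in the IOmeter tests

for mtu in (1500, 9000):  # assumed standard and jumbo MTUs
    print(
        f"MTU {mtu}: {segments_per_io(IO_SIZE, mtu)} segments per 32 KiB IO, "
        f"{wire_efficiency(mtu):.1%} wire efficiency"
    )
```

Under these assumptions the raw bandwidth gain from jumbo frames is only a few percent, so it cannot by itself explain a roughly threefold difference. The larger change is the drop from 23 to 4 segments per IO, which cuts per-packet processing in the guest, the virtual switch and the host. Whether that is what actually happened in this environment is not established by the numbers in the post.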
